AI makes workers more productive, but we are still lacking in regulations, according to new research. The 2024 AI Index Report, published by the Stanford University Human-Centered Artificial Intelligence institute, has uncovered the top eight AI trends for businesses, including how the technology still does not best the human brain on every task.

TechRepublic digs into the business implications of these takeaways, with insight from report co-authors Robi Rahman and Anka Reuel.

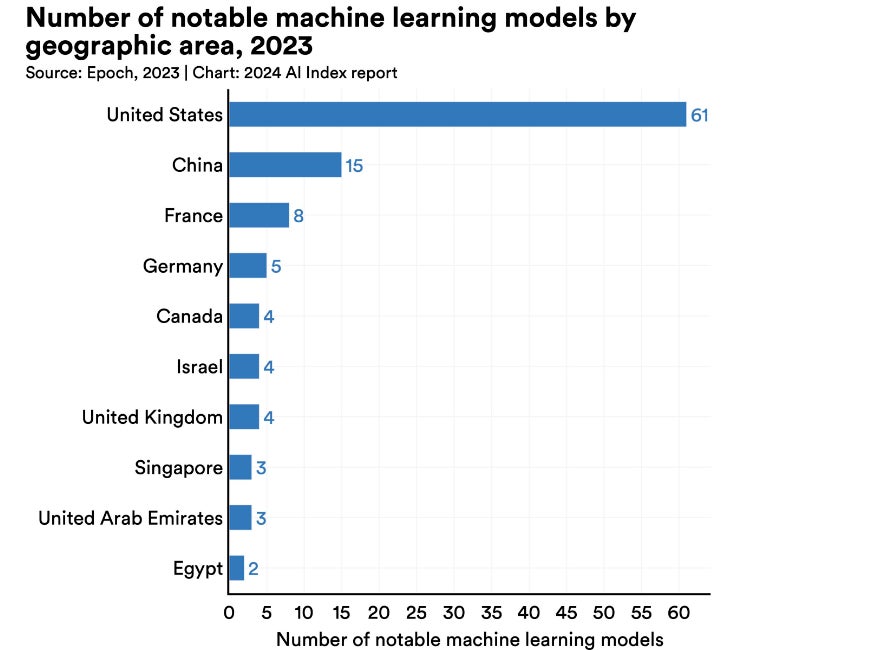

According to the research, AI is still not as good as humans at the complex tasks of advanced-level mathematical problem solving, visual commonsense reasoning and planning (Figure A). To draw this conclusion, models were compared to human benchmarks in many different business functions, including coding, agent-based behaviour, reasoning and reinforcement learning.

Figure A

While AI did surpass human capabilities in image classification, visual reasoning and English understanding, the results show there is a risk of businesses using AI for tasks where human staff would actually perform better. Many businesses are already concerned about the consequences of over-reliance on AI products.

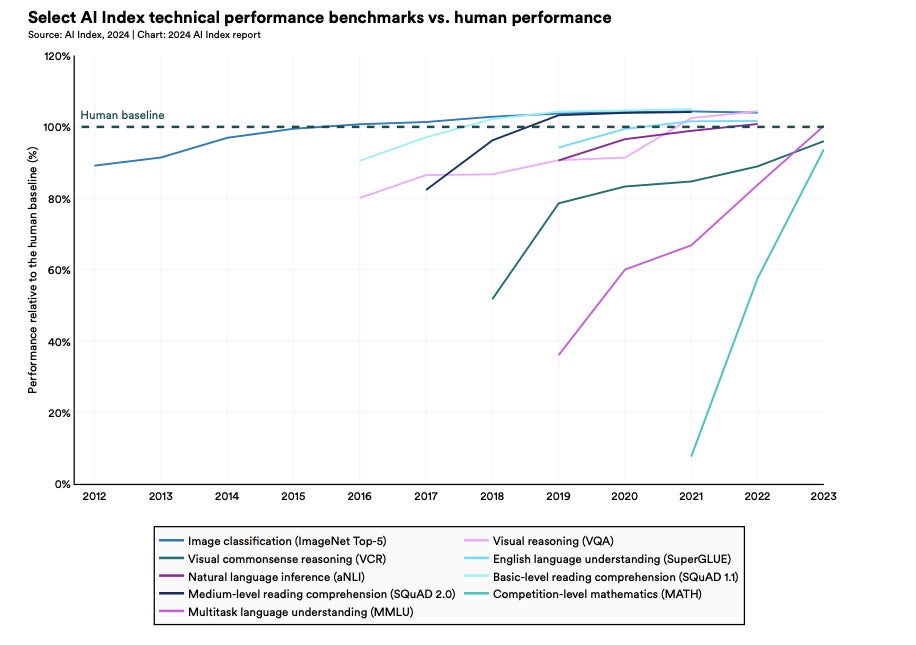

The AI Index reports that OpenAI’s GPT-4 and Google’s Gemini Ultra cost approximately $78 million and $191 million to train in 2023, respectively (Figure B). Data scientist Rahman told TechRepublic in an email: “At current growth rates, frontier AI models will cost around $5 billion to $10 billion in 2026, at which point very few companies will be able to afford these training runs.”

Figure B
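As a rough sanity check on the scale of that projection, the short Python sketch below works out the constant yearly cost multiplier implied by the figures quoted above. It assumes, purely for illustration, that Gemini Ultra's reported $191 million is the 2023 baseline and that costs compound evenly each year; the function name `implied_annual_growth` is hypothetical, and this is not the method used by the report's authors.

```python
# Back-of-the-envelope check using only figures quoted in the article.
# Assumptions (not from the report): Gemini Ultra's 2023 training cost is
# the baseline, and costs compound at a constant annual rate until 2026.


def implied_annual_growth(start_cost: float, end_cost: float, years: int) -> float:
    """Return the constant yearly multiplier that turns start_cost into end_cost."""
    return (end_cost / start_cost) ** (1 / years)


BASELINE_2023 = 191e6          # Gemini Ultra, US dollars (quoted above)
PROJECTED_2026 = (5e9, 10e9)   # Rahman's $5 billion to $10 billion range
YEARS = 2026 - 2023

low, high = (
    implied_annual_growth(BASELINE_2023, cost, YEARS) for cost in PROJECTED_2026
)
print(f"Implied growth: {low:.1f}x to {high:.1f}x per year")
```

Under those assumptions, training costs would need to roughly triple every year to land in the quoted range, which illustrates why so few companies would be able to keep pace.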

In October 2023, the Wall Street Journal reported that Google, Microsoft and other big tech players were struggling to monetise their generative AI products due to the massive costs associated with running them. There is a risk that, if the best technologies become so expensive that they are accessible only to large corporations, their advantage over SMBs could increase disproportionately. This risk was flagged by the World Economic Forum back in 2018.

However, Rahman highlighted that many of the best AI models are open source and thus available to businesses of all budgets, so the technology should not widen any gap. He told TechRepublic: “Open-source and closed-source AI models are growing at the same rate. One of the largest tech companies, Meta, is open-sourcing all of their models, so people who cannot afford to train the largest models themselves can just download theirs.”

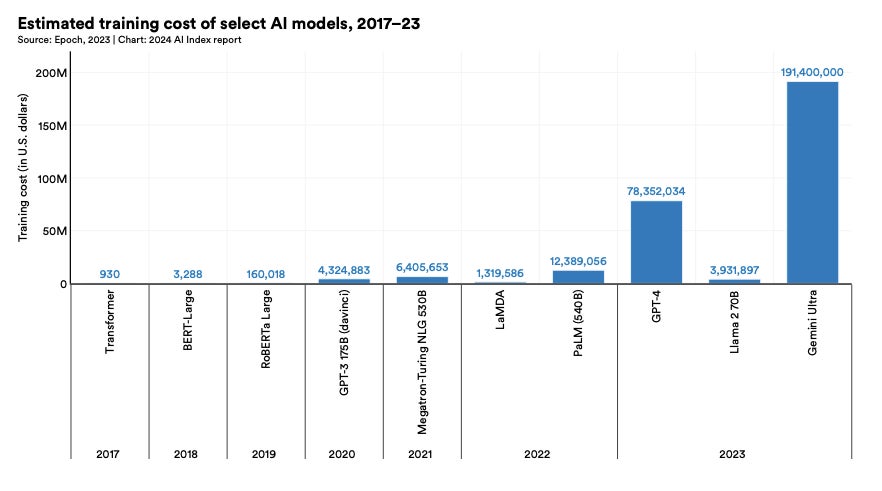

By evaluating a number of existing studies, the Stanford researchers concluded that AI enables workers to complete tasks more quickly and improves the quality of their output. The professions in which this was observed include computer programmers (32.8% of whom reported a productivity boost), consultants, support agents (Figure C) and recruiters.

Figure C

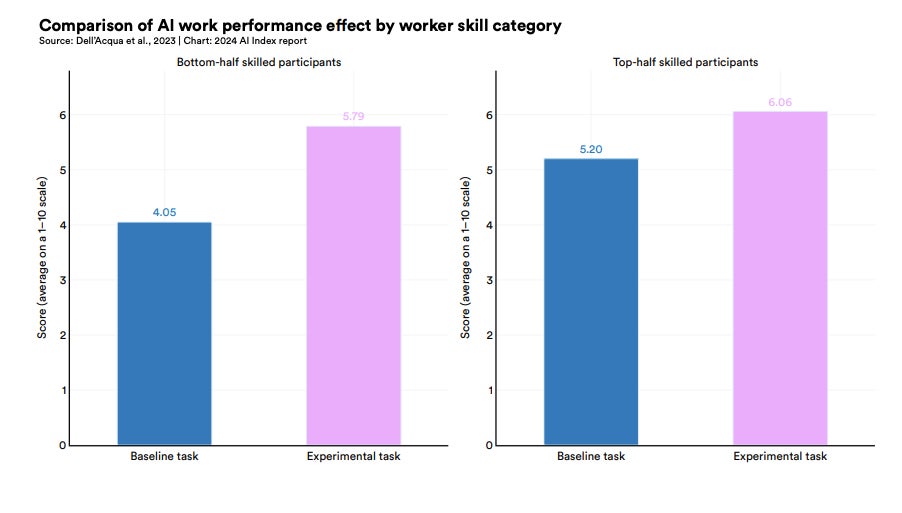

In the case of consultants, the use of GPT-4 bridged the gap between low-skilled and high-skilled professionals, with the low-skilled group experiencing more of a performance boost (Figure D). Other research has also indicated that generative AI in particular could act as an equaliser, as less experienced, lower-skilled workers get more out of it.

Figure D

However, other studies did suggest that “using AI without proper oversight can lead to diminished performance,” the researchers wrote. For example, there are widespread reports that hallucinations are prevalent in large language models that perform legal tasks. Other research has found that we may not reach the full potential of AI-enabled productivity gains for another decade, as unsatisfactory outputs, complicated guidelines and lack of proficiency continue to hold workers back.

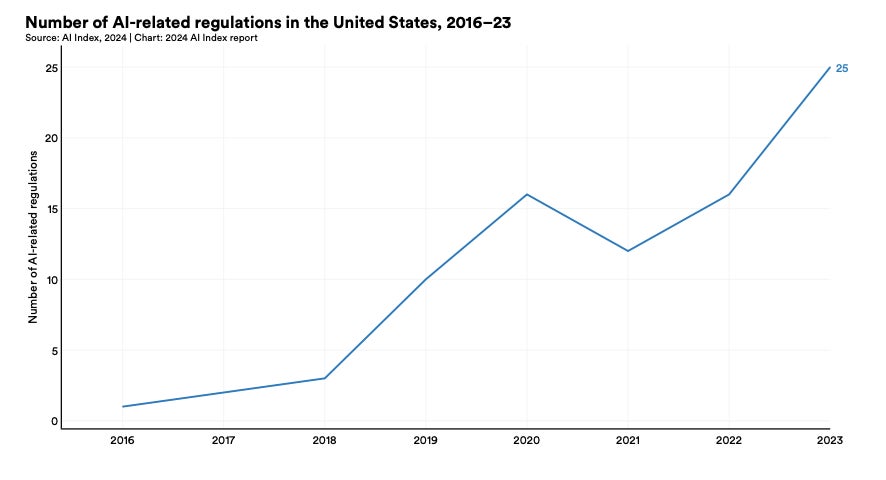

The AI Index Report found that, in 2023, there were 25 AI-related regulations active in the U.S., while in 2016 there was only one (Figure E). This hasn’t been a steady increase, though, as the total number of AI-related regulations grew by 56.3% from 2022 to 2023 alone. Over time, these regulations have also shifted from being expansive regarding AI progress to restrictive, and the most prevalent subject they touch on is foreign trade and international finance.

Figure E

AI-related legislation is also increasing in the EU, with 46, 22 and 32 new regulations being passed in 2021, 2022 and 2023, respectively. In this region, regulations tend to take a more expansive approach and most often cover science, technology and communications.

It is essential for businesses interested in AI to stay updated on the regulations that impact them, or they put themselves at risk of heavy non-compliance penalties and reputational damage. Research published in March 2024 found that only 2% of large companies in the U.K. and EU were aware of the incoming EU AI Act.

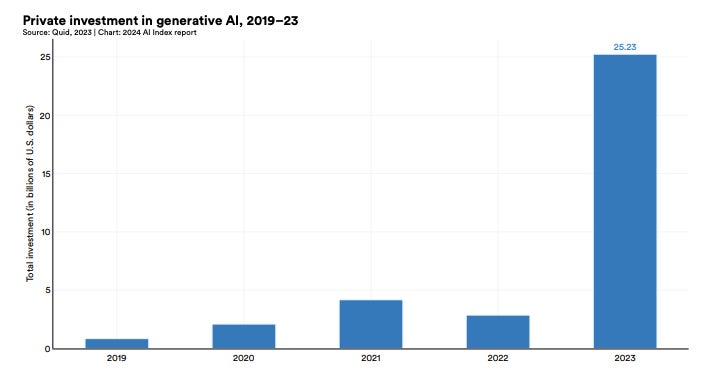

Funding for generative AI products that generate content in response to a prompt nearly octupled from 2022 to 2023, reaching $25.2 billion (Figure F). OpenAI, Anthropic, Hugging Face and Inflection, amongst others, all raised substantial funding rounds.

Figure F

The buildout of generative AI capabilities is likely to meet demand from businesses looking to adopt it into their processes. In 2023, generative AI was cited in 19.7% of all earnings calls of Fortune 500 companies, and a McKinsey report revealed that 55% of organisations now use AI, including generative AI, in at least one business unit or function.

Awareness of generative AI boomed after the launch of ChatGPT on November 30, 2022, and since then, organisations have been racing to incorporate its capabilities into their products or services. A recent survey of 300 global businesses conducted by MIT Technology Review Insights, in partnership with Telstra International, found that respondents expect their number of functions deploying generative AI to more than double in 2024.

However, there is some evidence that the boom in generative AI “could come to a fairly swift end”, according to leading AI voice Gary Marcus, and businesses should be wary. This is primarily due to limitations in current technologies, such as potential for bias, copyright issues and inaccuracies. According to the Stanford report, the finite amount of online data available to train models could exacerbate existing issues, placing a ceiling on improvements and scalability. It states that AI firms could run out of high-quality language data by 2026, low-quality language data in two decades and image data by the late 2030s to mid-2040s.

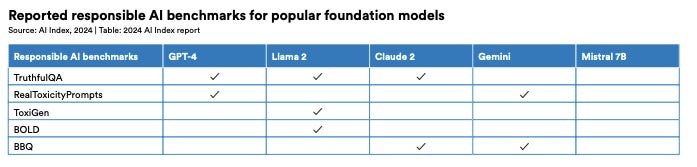

There is significant variation in the benchmarks that tech companies evaluate their LLMs against when it comes to trustworthiness or responsibility, according to the report (Figure G). The researchers wrote that this “complicates efforts to systematically compare the risks and limitations of top AI models.” These risks include biased outputs and leaking private information from training datasets and conversation histories.

Figure G

Reuel, a PhD student in the Stanford Intelligent Systems Laboratory, told TechRepublic in an email: “There are currently no reporting requirements, nor do we have robust evaluations that would allow us to confidently say that a model is safe if it passes those evaluations in the first place.”

Without standardisation in this area, the risk that some untrustworthy AI models may slip through the cracks and be integrated by businesses increases. “Developers might selectively report benchmarks that positively highlight their model’s performance,” the report added.

Reuel told TechRepublic: “There are multiple reasons why a harmful model can slip through the cracks. Firstly, no standardised or required evaluations making it hard to compare models and their (relative) risks, and secondly, no robust evaluations, specifically of foundation models, that allow for a solid, comprehensive understanding of the absolute risk of a model.”

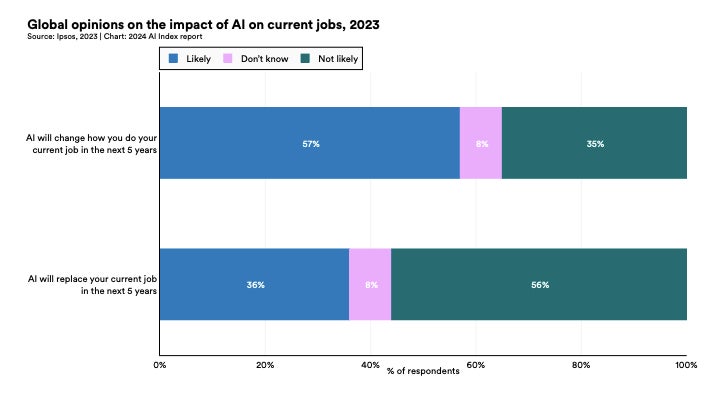

The report also tracked how attitudes towards AI are changing as awareness increases. One survey found that 52% of respondents express nervousness towards AI products and services, and that this figure had risen by 13 percentage points over 18 months. It also found that only 54% of adults agree that products and services using AI have more benefits than drawbacks, while 36% are fearful it may take their job within the next five years (Figure H).

Figure H

Other surveys referenced in the AI Index Report found that 53% of Americans currently feel more concerned about AI than excited, and that the joint most common concern they have is its impact on jobs. Such worries could have a particular impact on employee mental health when AI technologies start to be integrated into an organisation, which business leaders should monitor.

TechRepublic’s Ben Abbott covered this trend from the Stanford report in his article about building AI foundation models in the APAC region. He wrote, in part:

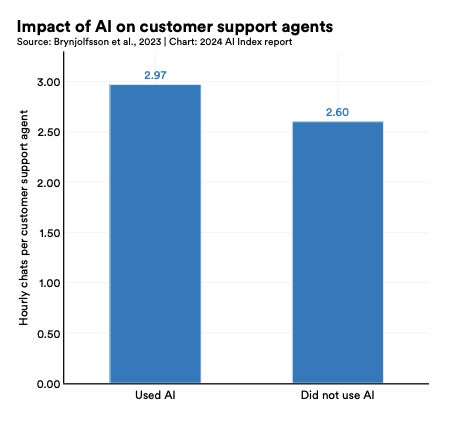

“The dominance of the U.S. in AI continued throughout 2023. Stanford’s AI Index Report released in 2024 found 61 notable models had been released in the U.S. in 2023; this was ahead of China’s 15 new models and France, the biggest contributor from Europe with eight models (Figure I). The U.K. and European Union as a region produced 25 notable models — beating China for the first time since 2019 — while Singapore, with three models, was the only other producer of notable large language models in APAC.”

Figure I